PP-PicoDet

News

- Released a new series of PP-PicoDet models (2022.03.20):

- (1) The new models use TAL/ETA Head and an optimized PAN, which greatly improves accuracy;

- (2) CPU prediction speed is optimized, and training speed is greatly improved;

- (3) The exported model includes post-processing, so prediction directly outputs the final result; no secondary development is needed and the migration cost is lower.

Legacy Model

- Please refer to: PicoDet 2021.10

Introduction

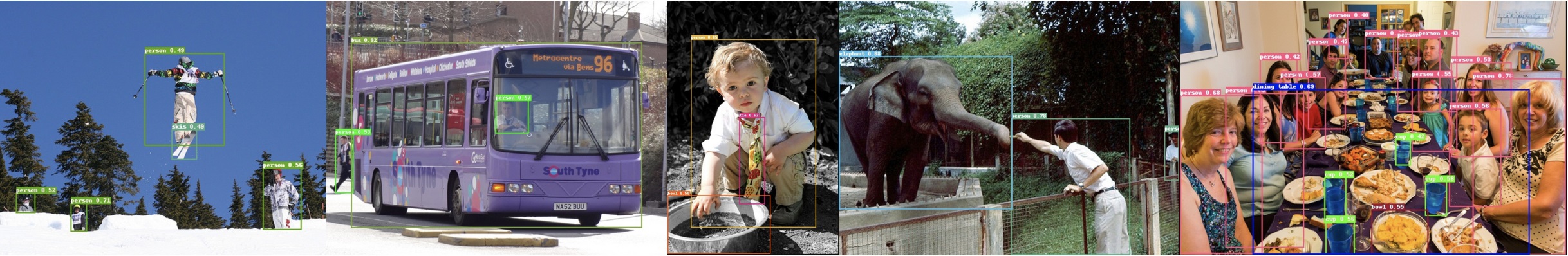

We developed a series of lightweight models named PP-PicoDet. Thanks to their strong accuracy and low latency, these models are well suited for deployment on mobile devices or CPUs. For more details, please refer to our report on arXiv.

- 🌟 Higher mAP: the first object detector to exceed 30 mAP(0.5:0.95) with fewer than 1M parameters at an input size of 416.

- 🚀 Lower latency: 150 FPS on a mobile ARM CPU.

- 😊 Deploy friendly: supports PaddleLite/MNN/NCNN/OpenVINO and provides C++/Python/Android implementations.

- 😍 Advanced algorithm: builds on state-of-the-art algorithms and introduces new components such as ESNet, CSP-PAN, and SimOTA with VFL.

Benchmark

| Model | Input size | mAP val 0.5:0.95 | mAP val 0.5 | Params (M) | FLOPS (G) | Latency CPU (ms) | Latency Lite (ms) | Weight | Config | Inference Model |
|---|---|---|---|---|---|---|---|---|---|---|
| PicoDet-XS | 320*320 | 23.5 | 36.1 | 0.70 | 0.67 | 3.9 | 7.81 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-XS | 416*416 | 26.2 | 39.3 | 0.70 | 1.13 | 6.1 | 12.38 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-S | 320*320 | 29.1 | 43.4 | 1.18 | 0.97 | 4.8 | 9.56 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-S | 416*416 | 32.5 | 47.6 | 1.18 | 1.65 | 6.6 | 15.20 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-M | 320*320 | 34.4 | 50.0 | 3.46 | 2.57 | 8.2 | 17.68 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-M | 416*416 | 37.5 | 53.4 | 3.46 | 4.34 | 12.7 | 28.39 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-L | 320*320 | 36.1 | 52.0 | 5.80 | 4.20 | 11.5 | 25.21 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-L | 416*416 | 39.4 | 55.7 | 5.80 | 7.10 | 20.7 | 42.23 | model / log | config | w/ postprocess / w/o postprocess |
| PicoDet-L | 640*640 | 42.6 | 59.2 | 5.80 | 16.81 | 62.5 | 108.1 | model / log | config | w/ postprocess / w/o postprocess |

Table Notes:

- Latency: All models are tested on an Intel Core i7 10750H CPU with MKLDNN using 12 threads, and on a Qualcomm Snapdragon 865 (4xA77+4xA55) using 4 threads on ARMv8 with FP16. In the table above, CPU latency is measured with Paddle-Inference and mobile latency (Lite) is measured with Paddle-Lite.

- PicoDet is trained on the COCO train2017 dataset and evaluated on COCO val2017. All models are trained with 4 GPUs, and all checkpoints are trained with the default settings and hyperparameters.

- Benchmark test: When testing the speed benchmark, post-processing is not included in the exported model. To reproduce this, set -o export.benchmark=True or manually modify runtime.yml.
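When comparing the latency columns against a frames-per-second target, the conversion is a simple reciprocal. The sketch below is illustrative only: the helper name `latency_ms_to_fps` is hypothetical, it assumes single-image (batch size 1) inference with no other overhead, and the sample values are copied from the Paddle-Lite latency column of the table above.

```python
def latency_ms_to_fps(latency_ms: float) -> float:
    """Convert a per-image latency in milliseconds to frames per second."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


# Paddle-Lite latencies (ms) copied from the benchmark table above.
lite_latency_ms = {
    "PicoDet-XS 320": 7.81,
    "PicoDet-S 320": 9.56,
    "PicoDet-M 320": 17.68,
}

for name, ms in lite_latency_ms.items():
    print(f"{name}: {latency_ms_to_fps(ms):.1f} FPS")
```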

Benchmark of Other Models

| Model | Input size | mAP val 0.5:0.95 | mAP val 0.5 | Params (M) | FLOPS (G) | Latency NCNN (ms) |
|---|---|---|---|---|---|---|
| YOLOv3-Tiny | 416*416 | 16.6 | 33.1 | 8.86 | 5.62 | 25.42 |
| YOLOv4-Tiny | 416*416 | 21.7 | 40.2 | 6.06 | 6.96 | 23.69 |
| PP-YOLO-Tiny | 320*320 | 20.6 | - | 1.08 | 0.58 | 6.75 |
| PP-YOLO-Tiny | 416*416 | 22.7 | - | 1.08 | 1.02 | 10.48 |
| Nanodet-M | 320*320 | 20.6 | - | 0.95 | 0.72 | 8.71 |
| Nanodet-M | 416*416 | 23.5 | - | 0.95 | 1.2 | 13.35 |
| Nanodet-M 1.5x | 416*416 | 26.8 | - | 2.08 | 2.42 | 15.83 |
| YOLOX-Nano | 416*416 | 25.8 | - | 0.91 | 1.08 | 19.23 |
| YOLOX-Tiny | 416*416 | 32.8 | - | 5.06 | 6.45 | 32.77 |
| YOLOv5n | 640*640 | 28.4 | 46.0 | 1.9 | 4.5 | 40.35 |
| YOLOv5s | 640*640 | 37.2 | 56.0 | 7.2 | 16.5 | 78.05 |

- Mobile latency is tested with the code from MobileDetBenchmark.

Quick Start

Requirements:

- PaddlePaddle >= 2.2.2

Installation

Training and Evaluation

- Training model on single-GPU:

# training on single-GPU

export CUDA_VISIBLE_DEVICES=0

python tools/train.py -c configs/picodet/picodet_s_320_coco_lcnet.yml --eval

If the GPU runs out of memory during training, reduce batch_size in TrainReader and reduce base_lr in LearningRate proportionally. Note also that all published configs are trained with 4 GPUs; if you train with 1 GPU instead, base_lr needs to be reduced by a factor of 4.
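The proportional adjustment described above can be worked through numerically. This is a minimal sketch, not part of PaddleDetection: the function `scaled_lr` is hypothetical, and the reference values (base_lr 0.32, batch_size 64 per GPU) are placeholders chosen for illustration, so read the real values from LearningRate and TrainReader in your config.

```python
def scaled_lr(ref_lr, ref_gpus, ref_batch_size, gpus, batch_size):
    """Scale the learning rate linearly with the total batch size.

    Total batch size = number of GPUs * per-GPU batch_size.
    """
    ref_total = ref_gpus * ref_batch_size
    new_total = gpus * batch_size
    return ref_lr * new_total / ref_total


# Placeholder reference setup (assumed values, 4 GPUs as in the published configs).
REF_LR, REF_GPUS, REF_BATCH = 0.32, 4, 64

# 4 GPUs -> 1 GPU with the same per-GPU batch size: base_lr divided by 4.
print(scaled_lr(REF_LR, REF_GPUS, REF_BATCH, gpus=1, batch_size=64))

# 1 GPU and half the per-GPU batch size (out-of-memory case): base_lr divided by 8.
print(scaled_lr(REF_LR, REF_GPUS, REF_BATCH, gpus=1, batch_size=32))
```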

- Training model on multi-GPU:

# training on multi-GPU

export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7

python -m paddle.distributed.launch --gpus 0,1,2,3,4,5,6,7 tools/train.py -c configs/picodet/picodet_s_320_coco_lcnet.yml --eval

- Evaluation:

python tools/eval.py -c configs/picodet/picodet_s_320_coco_lcnet.yml \

-o weights=https://paddledet.bj.bcebos.com/models/picodet_s_320_coco_lcnet.pdparams

- Infer:

python tools/infer.py -c configs/picodet/picodet_s_320_coco_lcnet.yml \

-o weights=https://paddledet.bj.bcebos.com/models/picodet_s_320_coco_lcnet.pdparams

For more details, refer to the Quick start guide.

Deployment

Export and Convert Model

1. Export model

cd PaddleDetection

python tools/export_model.py -c configs/picodet/picodet_s_320_coco_lcnet.yml \

-o weights=https://paddledet.bj.bcebos.com/models/picodet_s_320_coco_lcnet.pdparams \

--output_dir=output_inference

- If no post-processing is required, specify -o export.post_process=False (if -o has already appeared, delete the -o here) or manually modify the corresponding fields in runtime.yml.

- If no NMS is required, specify -o export.nms=False or manually modify the corresponding fields in runtime.yml. Many ONNX deployment scenarios support only a single input and a fixed-shape output, so when exporting to ONNX it is recommended not to export NMS.
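The note about not repeating -o can be illustrated by assembling the export command programmatically. The sketch below is an assumption-laden illustration: `build_export_cmd` is a hypothetical helper, not a PaddleDetection API, and it assumes that a stage is disabled by setting its export.* field to False. It only builds and prints the command; it does not run it.

```python
import shlex


def build_export_cmd(config, weights, output_dir, post_process=True, nms=True):
    """Assemble the export command as an argument list.

    All overrides follow a single -o flag, so -o is never repeated.
    """
    overrides = [f"weights={weights}"]
    if not post_process:
        overrides.append("export.post_process=False")
    if not nms:
        overrides.append("export.nms=False")
    return [
        "python", "tools/export_model.py",
        "-c", config,
        "-o", *overrides,
        f"--output_dir={output_dir}",
    ]


cmd = build_export_cmd(
    config="configs/picodet/picodet_s_320_coco_lcnet.yml",
    weights="https://paddledet.bj.bcebos.com/models/picodet_s_320_coco_lcnet.pdparams",
    output_dir="output_inference",
    nms=False,  # e.g. when the exported model will be converted to ONNX
)
print(shlex.join(cmd))
```

Keeping the arguments as a list also makes it straightforward to pass them to `subprocess.run` from the PaddleDetection root directory if you prefer to launch the export from a script.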

2. Convert to PaddleLite

- Install Paddlelite>=2.10:

pip install paddlelite

- Convert model:

# FP32

paddle_lite_opt --model_dir=output_inference/picodet_s_320_coco_lcnet --valid_targets=arm --optimize_out=picodet_s_320_coco_fp32

# FP16

paddle_lite_opt --model_dir=output_inference/picodet_s_320_coco_lcnet --valid_targets=arm --optimize_out=picodet_s_320_coco_fp16 --enable_fp16=true

3. Convert to ONNX

- Install Paddle2ONNX >= 0.7 and ONNX > 1.10.1. For details, please refer to Tutorials of Export ONNX Model.

pip install onnx

pip install paddle2onnx==0.9.2

- Convert model:

paddle2onnx --model_dir output_inference/picodet_s_320_coco_lcnet/ \

--model_filename model.pdmodel \

--params_filename model.pdiparams \

--opset_version 11 \

--save_file picodet_s_320_coco.onnx

- Simplify ONNX model: use onnx-simplifier to simplify the ONNX model.

- Install onnxsim >= 0.4.1:

pip install onnxsim

- Simplify the ONNX model:

onnxsim picodet_s_320_coco.onnx picodet_s_processed.onnx

- Deploy models

| Model | Input size | ONNX | Paddle Lite(fp32) | Paddle Lite(fp16) |
|---|---|---|---|---|
| PicoDet-XS | 320*320 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-XS | 416*416 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-S | 320*320 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-S | 416*416 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-M | 320*320 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-M | 416*416 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-L | 320*320 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-L | 416*416 | w/ postprocess / w/o postprocess | model | model |
| PicoDet-L | 640*640 | w/ postprocess / w/o postprocess | model | model |

Deploy

| Infer Engine | Python | C++ | Predict With Postprocess |
|---|---|---|---|
| OpenVINO | Python | C++(postprocess coming soon) | ✔︎ |
| Paddle Lite | - | C++ | ✔︎ |
| Android Demo | - | Paddle Lite | ✔︎ |
| PaddleInference | Python | C++ | ✔︎ |
| ONNXRuntime | Python | Coming soon | ✔︎ |
| NCNN | Coming soon | C++ | ✘ |
| MNN | Coming soon | C++ | ✘ |

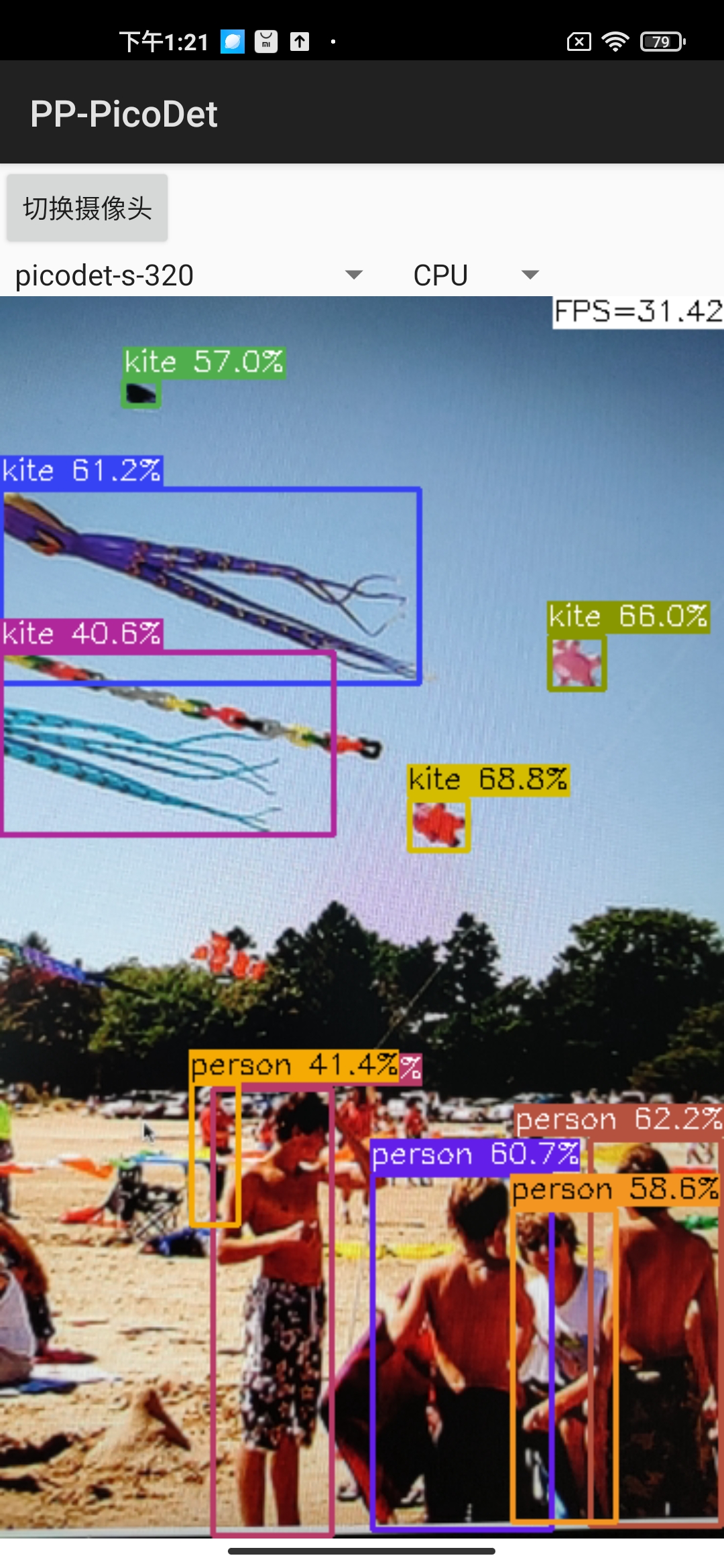

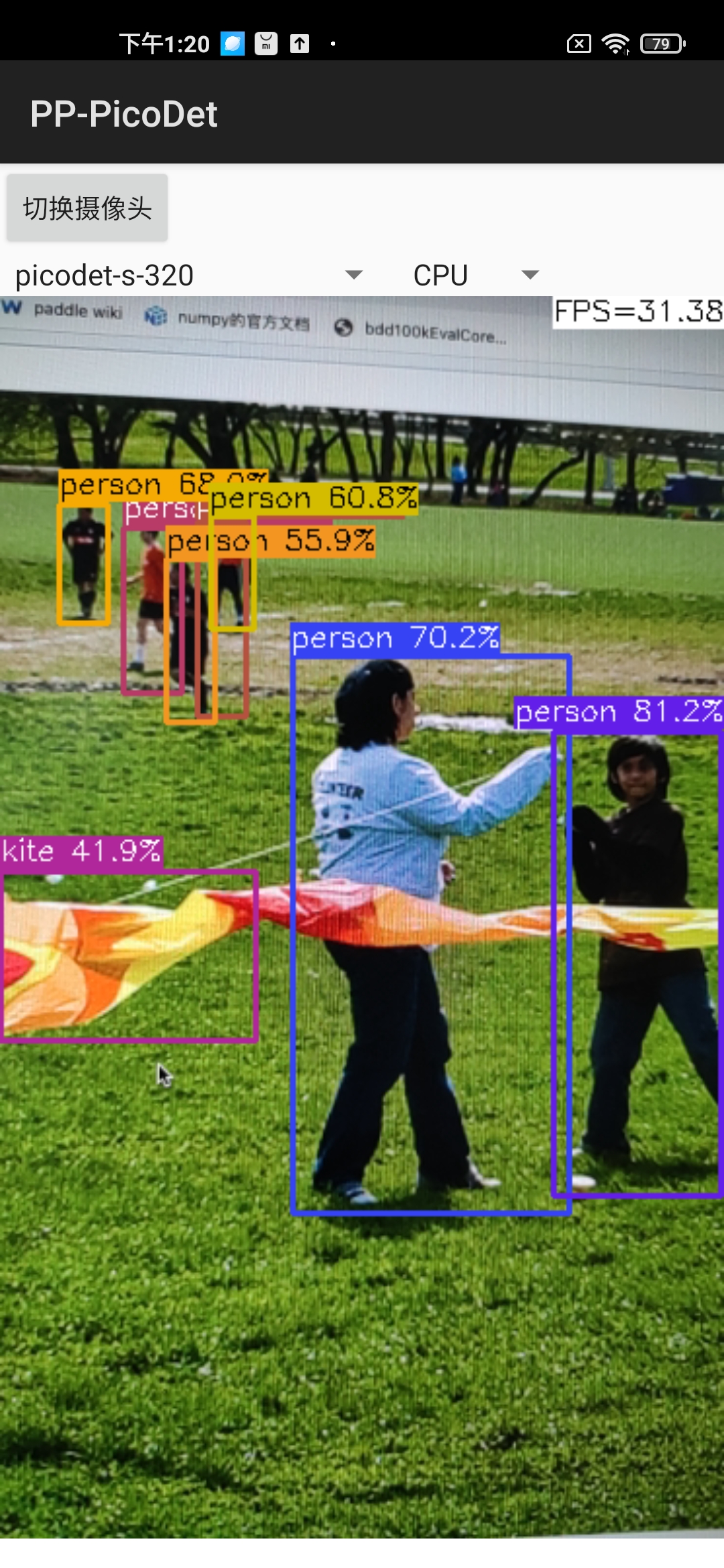

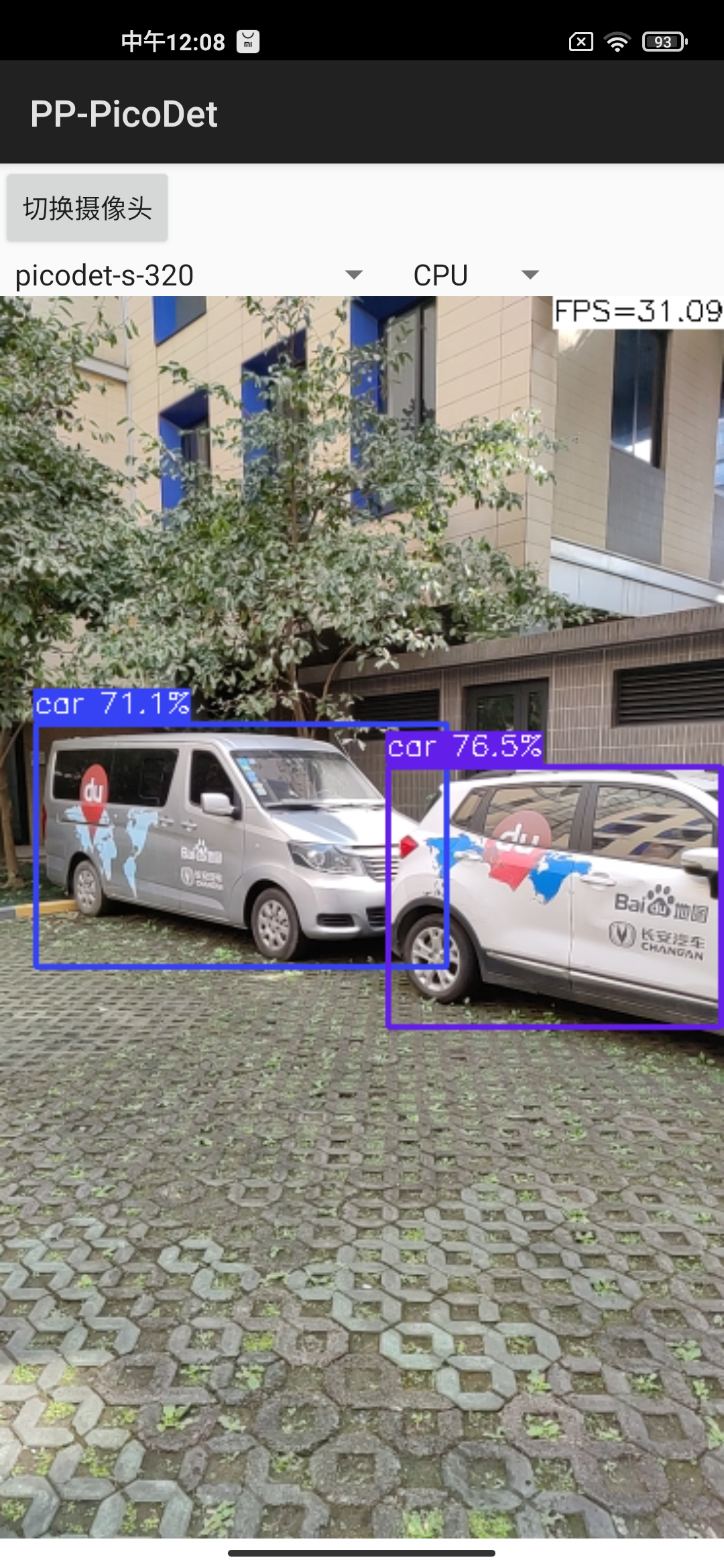

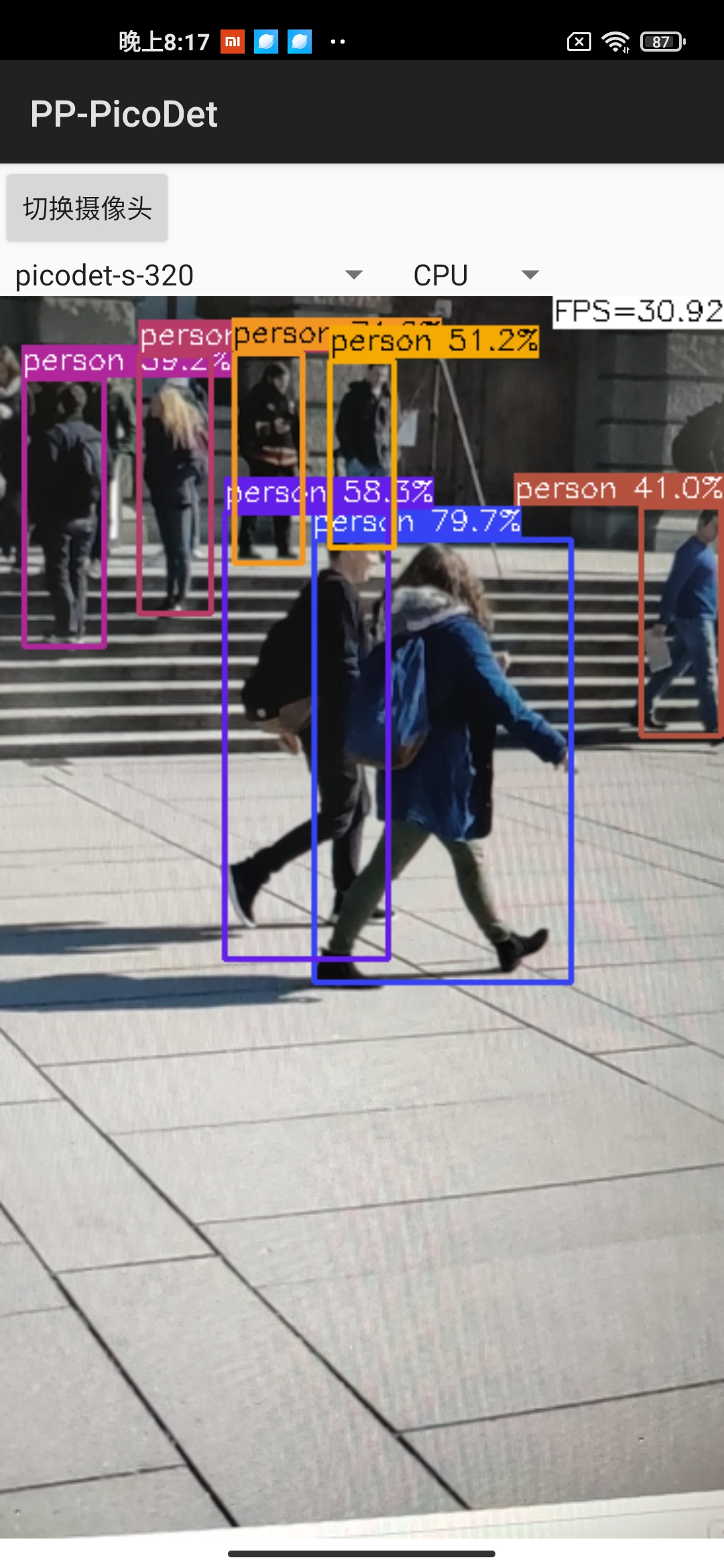

Android demo visualization:

Quantization

Requirements:

- PaddlePaddle >= 2.2.2

- PaddleSlim >= 2.2.2

Install:

pip install paddleslim==2.2.2

Quant aware

Configure the quant config and start training:

python tools/train.py -c configs/picodet/picodet_s_416_coco_lcnet.yml \

--slim_config configs/slim/quant/picodet_s_416_lcnet_quant.yml --eval

- For more details, refer to the slim document.

- Quant Aware Model ZOO:

| Quant Model | Input size | mAP val 0.5:0.95 | Configs | Weight | Inference Model | Paddle Lite(INT8) |
|---|---|---|---|---|---|---|
| PicoDet-S | 416*416 | 31.5 | config / slim config | model | w/ postprocess / w/o postprocess | w/ postprocess / w/o postprocess |

Unstructured Pruning

Tutorial:

Please refer to this documentation for details such as requirements, training, and deployment.

Application

- Pedestrian detection: for the PicoDet-S-Pedestrian model zoo, please refer to PP-TinyPose.

- Mainbody detection: for the PicoDet-L-Mainbody model zoo, please refer to mainbody detection.

FAQ

Out of memory error.

Please reduce the batch_size of TrainReader in the config.

How to do transfer learning.

Set pretrain_weights in the config to weights trained on COCO, for example:

pretrain_weights: https://paddledet.bj.bcebos.com/models/picodet_l_640_coco_lcnet.pdparams

The transpose operator is time-consuming on some hardware.

Please use the PicoDet-LCNet model, which has fewer transpose operators.

How to count model parameters.

You can insert the following code here to count learnable parameters.

params = sum([
    p.numel() for n, p in self.model.named_parameters()
    if all([x not in n for x in ['_mean', '_variance']])
])  # exclude BatchNorm running status
print('params: ', params)
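The filtering logic in the snippet above can be tried outside the trainer. The sketch below is an illustration under stated assumptions: the (name, shape) pairs are invented stand-ins for what `named_parameters()` yields, and `count_learnable` is a hypothetical helper, so the example runs without PaddlePaddle installed.

```python
from math import prod

# Hypothetical (name, shape) pairs standing in for model.named_parameters().
fake_named_parameters = [
    ("conv1.weight", (16, 3, 3, 3)),
    ("bn1.weight", (16,)),
    ("bn1.bias", (16,)),
    ("bn1._mean", (16,)),      # BatchNorm running mean, not learnable
    ("bn1._variance", (16,)),  # BatchNorm running variance, not learnable
]

EXCLUDED = ("_mean", "_variance")


def count_learnable(named_shapes):
    """Sum element counts, skipping BatchNorm running statistics."""
    return sum(
        prod(shape)
        for name, shape in named_shapes
        if all(tag not in name for tag in EXCLUDED)
    )


print("params:", count_learnable(fake_named_parameters))
```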

Cite PP-PicoDet

If you use PicoDet in your research, please cite our work by using the following BibTeX entry:

@misc{yu2021pppicodet,

title={PP-PicoDet: A Better Real-Time Object Detector on Mobile Devices},

author={Guanghua Yu and Qinyao Chang and Wenyu Lv and Chang Xu and Cheng Cui and Wei Ji and Qingqing Dang and Kaipeng Deng and Guanzhong Wang and Yuning Du and Baohua Lai and Qiwen Liu and Xiaoguang Hu and Dianhai Yu and Yanjun Ma},

year={2021},

eprint={2111.00902},

archivePrefix={arXiv},

primaryClass={cs.CV}

}